From vibe coding to autonomous agent companies

Vibe coding accelerates production. Autonomous agent companies solve the problem of direction, style, and governance.

Today a team can produce more software in a single afternoon than it could in a sprint last year. A founder opens Claude Code or Cursor, types a specification, accepts patches, runs tests, deploys a prototype. The speed is real. The direction remains murky. Two weeks later there is a working app without an audience, an internal tool without an owner, a dashboard showing metrics nobody acts on. The production problem has gotten smaller. The intent problem is still fully on the table.

That friction is now visible in nearly every AI-native team. Vibe coding has accelerated building. It has not determined what should be built, in what order, under which standards, and with what coherent judgment about quality. The LLM writes code. Someone still has to decide which code matters.

That is where the logic of autonomous agent companies begins.

The difference between producing and wanting

Software, content, and internal workflows consist of two layers. The first layer is production: writing, generating, testing, deploying, publishing. The second layer is intent: choosing what gets made, why it fits, how it sounds, which boundaries apply, and who has the final word when outputs conflict.

Vibe coding compresses the production layer. That effect is directly measurable in daily practice. A developer uses Claude Code for a refactor that used to take half a day. A marketer uses a model to generate three variants of a landing page in twenty minutes. A founder builds an admin panel without hiring a separate frontend developer. The threshold for creating something that works has dropped considerably.

That is a real shift. It only solves a limited problem.

A model has no built-in opinion about your company’s identity, your team’s quality bar, your publications’ style, or the order in which work should be done. A prompt can provide context. A prompt is not an organization. Every session starts from scratch, unless you build structure that preserves intent across tasks, people, and agents.

Once the cost of production drops, intent becomes more valuable. Previously, scarcity filtered bad ideas naturally. A bad idea cost months of engineer time, so it was killed earlier in discussion. Now a bad idea costs an afternoon. As a result, it gets much further into the system. The new bottleneck is no longer “can we build this?” but “should we build this, and who guards that answer over time?”

What an autonomous agent company is

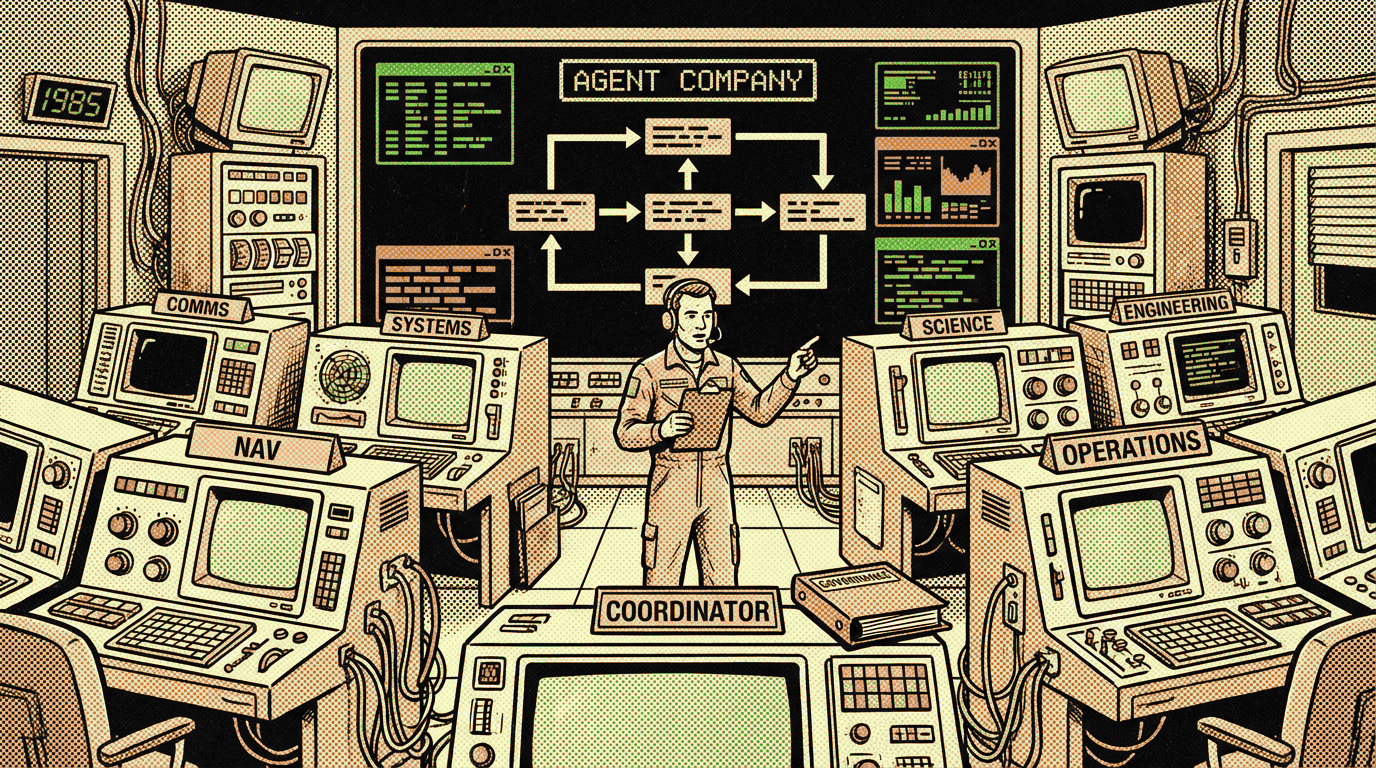

An autonomous agent company is a team of specialized AI agents that operates with roles, style guides, handoff moments, and governance. The unit of work shifts from the individual session to the organized system.

The basic components are simple.

Roles. Each agent has a defined domain. A writer drafts text. A chief editor reviews. An art director translates the core message into visuals. A publisher places and deploys. A coding agent maintains code. Roles reduce overlap and make responsibility visible.

Style guides. A style guide is externalized intent. It contains not only taste, but operational decision rules: tone, structure, forbidden patterns, criteria for approval. This means an agent operates not only on model capability, but within a fixed framework that persists across sessions.

Governance. Governance determines who may publish, who sends work back, who resolves conflicts, how many revision rounds are allowed, and when a human intervenes. Without governance, agent teams grow into a pile of loose outputs. With governance, an organization emerges.

That last point is underestimated. Many teams talk about agents as though they are improved scripts. That description is too small. An autonomous agent company is not a set of scripts. It is a formal work structure for intentional work. It organizes production, judgment, and handoffs in a single system.
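As a rough illustration of the three components above, the following Python sketch models roles, style guides, and governance as plain data. All class and field names are hypothetical and chosen for this example only. They do not come from any of the tools discussed in this essay.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, Optional, Tuple


@dataclass(frozen=True)
class StyleGuide:
    """Externalized intent: decision rules that persist across sessions."""
    tone: str
    forbidden_patterns: Tuple[str, ...]
    approval_criteria: Tuple[str, ...]


@dataclass(frozen=True)
class Role:
    """One agent with one defined domain."""
    name: str
    domain: str
    style_guide: Optional[StyleGuide] = None


@dataclass(frozen=True)
class Governance:
    """Who may publish, who may send work back, and when a human steps in."""
    may_publish: FrozenSet[str]
    may_send_back: FrozenSet[str]
    max_revision_rounds: int
    human_escalation: str

    def can_publish(self, role: Role) -> bool:
        return role.name in self.may_publish


@dataclass
class AgentCompany:
    roles: Dict[str, Role] = field(default_factory=dict)
    governance: Optional[Governance] = None

    def add_role(self, role: Role) -> None:
        # Roles reduce overlap: refuse a second agent claiming the same domain.
        for existing in self.roles.values():
            if existing.domain == role.domain:
                raise ValueError(
                    f"domain {role.domain!r} is already owned by {existing.name}"
                )
        self.roles[role.name] = role
```

The point of the sketch is that none of these definitions mention a model. Roles, rules, and permissions can be written down and checked before any agent runs.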

The tooling landscape points in the same direction

The tools of 2025 and 2026 all point the same way. They do so at different levels of the stack.

Claude Code sits close to production. It is a strong coding agent for work in a project directory: understanding code, modifying, testing, iterating. This is the vibe coding layer at high quality. Claude Code accelerates building. It does not organize an entire company. It excels at execution, not at providing a complete institutional structure.

OpenClaw moves one layer up. In the multi-agent documentation, OpenClaw describes one gateway with multiple isolated agents, each with its own workspace, its own agentDir, its own session storage, and its own bindings to channels. That detail matters. An agent gets its own memory, its own context, and its own auth profiles. That is not a convenient implementation detail. It is the technical prerequisite for role-consistent behavior. Once agents have their own workspace and state, they can carry different personas, different tools, and different responsibilities without leaking into one another.

CrewAI names the same shift explicitly. The documentation splits the system into Crews and Flows. Crews are teams of autonomous agents collaborating on tasks. Flows are the event-driven layer that manages state and execution. At the enterprise level, CrewAI adds agent repositories: a central library of standardized agents that teams can share and reuse. That is exactly how organizations stabilize intent: through standard roles and reusable definitions.

AutoGen uses different language, but the same pattern. Microsoft positions AutoGen as an event-driven framework for scalable multi-agent systems. The docs describe agents as software entities that exchange messages, maintain their own state, and perform actions with external effects. Once messages, state, and external actions become first-class concepts, the design shifts from promptcraft to organization design.

Cowork agents make the movement visible on the product side. Microsoft wrote on March 30, 2026, that Copilot Cowork had become available via Frontier for long-running, multi-step work in Microsoft 365. That is no longer a regular assistant rewriting an email. It is a system for delegation of multi-step work in apps where work already takes place. The interface remains conversational. The underlying form moves toward teamwork.

These tools differ from one another. They share one direction: from loose model interaction to coordinated, multi-step, role-consistent systems.

Vic Boomer as a concrete example, and also as a boundary case

Vic Boomer is an essay-led AI studio at vicboomer.com. The writing part of Vic Boomer functions as an agent company. This deliberately applies only to the essay and publication part of the business. Products, solutions, and services are not executed by agents. That boundary matters substantively. An agent company works well where rules, style, handoffs, and review can be sharply defined. Not every part of a business meets that criterion.

The structure of the Vic Boomer agent company is explicit.

Kelsey Peters is chief editor. She coordinates assignments and reviews every essay. Her decision space is tightly defined: approve, send back, or reject. The editorial style guide allows a maximum of two revision rounds.
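The chief editor's decision space can be read as a small state machine. The sketch below is one possible encoding. The three decisions and the limit of two revision rounds come from the description above. The status names, and the choice to escalate to a human once the revision budget is used up, are assumptions made for this example.

```python
from enum import Enum
from typing import Tuple


class Decision(Enum):
    APPROVE = "approve"
    SEND_BACK = "send_back"
    REJECT = "reject"


MAX_REVISION_ROUNDS = 2  # limit taken from the editorial style guide described above


def next_status(decision: Decision, revisions_used: int) -> Tuple[str, int]:
    """Return (new status, revision rounds used) after one editor decision."""
    if decision is Decision.APPROVE:
        return "approved", revisions_used
    if decision is Decision.REJECT:
        return "rejected", revisions_used
    # SEND_BACK is only possible while revision rounds remain.
    if revisions_used < MAX_REVISION_ROUNDS:
        return "in_revision", revisions_used + 1
    # Assumption: an exhausted revision budget goes to a human, not to a third round.
    return "escalated_to_human", revisions_used
```

A third attempt to send an essay back, `next_status(Decision.SEND_BACK, 2)`, returns the escalation status instead of starting another round.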

There are three writers with their own tone and function. Tom Notton is writer-pragmatic: compact, first-principles, technically clear. Martin Boomer is writer-strategic: he looks at market dynamics, positioning, and organizational implications. Eo Ena is writer-philosopher: he draws conceptual lines and exposes mechanisms beneath the surface. Three writers, three voice positions, one shared framework.

Noa Nakamura is art director. She works from a visual style guide with a VIC-20 color palette, halftone texture, 1980s settings, and twelve mood presets. An essay about agent orchestration therefore becomes a narrative scene with analog technology, a central focus, and an image brief tied to the one_big_idea, rather than a generic tech illustration.

Saul Reimer is publisher. He places content in Hugo and deploys. That technical detail matters. Vic Boomer runs on Hugo, and the Hugo workbook determines where essays live, how frontmatter looks, which image paths are valid, and when status: published coincides with draft: false.
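The rule that status: published coincides with draft: false is the kind of check a publisher agent could run before deploying. The sketch below assumes the frontmatter has already been parsed into a Python dict, and it assumes that draft must be set explicitly. The function name is hypothetical, and the actual Hugo workbook may define the rule differently.

```python
from typing import List


def frontmatter_problems(frontmatter: dict) -> List[str]:
    """Check that status 'published' and draft false always appear together."""
    problems = []
    status = frontmatter.get("status")
    draft = frontmatter.get("draft")
    # Assumption: a missing draft field counts as "not false" in this sketch.
    if status == "published" and draft is not False:
        problems.append("status is 'published' but draft is not false")
    if draft is False and status != "published":
        problems.append("draft is false but status is not 'published'")
    return problems
```

An empty list means the two fields agree. Any other result gives the publisher a concrete reason to stop before deployment.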

The editorial style guide is the core of the intent layer. It contains ten anti-patterns and an editor checklist. No hype language. No hedges. No “not X but Y” constructions. No rhetorical filler questions. No vague opening longer than three paragraphs. Every piece must begin with friction, have one Big Idea, and contain at least one concrete example. That document is not a writing aid. It is governance infrastructure. It makes a consistent studio possible across multiple agents.
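Some of these anti-patterns can be approximated mechanically before the chief editor reads a draft. The sketch below covers three of them with simple string and regex heuristics. The word lists are hypothetical placeholders, not the contents of the actual style guide, and the "not X but Y" pattern will also flag harmless sentences such as "not only ... but also", so it supports editorial judgment and does not replace it.

```python
import re
from typing import List

# Hypothetical placeholder lists; the real style guide defines its own.
HYPE_WORDS = ("revolutionary", "game-changing", "groundbreaking")
HEDGE_PHRASES = ("perhaps", "arguably", "it could be said")

# "not ... but" within a single sentence (no ., ? or ! in between).
NOT_X_BUT_Y = re.compile(r"\bnot\b[^.?!]*?\bbut\b", re.IGNORECASE)


def checklist_findings(text: str) -> List[str]:
    """Return a list of heuristic anti-pattern findings for a draft."""
    findings = []
    lowered = text.lower()
    for word in HYPE_WORDS:
        if word in lowered:
            findings.append(f"hype language: {word}")
    for phrase in HEDGE_PHRASES:
        if phrase in lowered:
            findings.append(f"hedge: {phrase}")
    if NOT_X_BUT_Y.search(text):
        findings.append("'not X but Y' construction")
    return findings
```

Rules such as "begin with friction" or "one Big Idea" are not covered here. They need a reviewing agent or a human, which is why the checklist sits with the chief editor.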

Here you see why autonomous agent companies go beyond vibe coding. Vibe coding would let Tom Notton write faster. The Vic Boomer structure determines when Tom writes, how he writes, who corrects, how many times he may be sent back, how Noa translates it visually, and when Saul places it technically. The speed sits in the model. The direction sits in the company.

Three external examples showing where this is headed

The first external example is OpenClaw. OpenClaw shows how an agent company takes technical shape once multiple agents can coexist in a single runtime, each with its own workspace and session history. That makes role-consistent behavior achievable. A chief-editor agent does not need to share the same context as a publisher agent. A writing agent does not need the same auth profiles as a messaging agent. Once that separation exists cleanly in the runtime, it becomes possible to separate not just tasks, but also responsibilities.

The second external example is Microsoft Copilot Cowork. Microsoft positions Cowork as tooling for long-running, multi-step work in Microsoft 365. This shifts the product from assistance to delegation. That is an important transition. An assistant helps with one step. A cowork agent takes on a task across multiple steps, in multiple apps, over time. Add team-specific rules, escalation moments, and role boundaries to that, and you get the basic form of an autonomous agent company within a corporate environment.

A third example sits in CrewAI. The combination of Crews, Flows, and enterprise agent repositories shows that the market does not just want agents, but standardized teams of agents. Once companies need agent repositories, the conversation has shifted. It is no longer about one clever prompt, but about management of roles, standards, and reusable business logic.

Why this is the next step after vibe coding

Vibe coding made one insight inescapable: production was more expensive than necessary. Autonomous agent companies make the next insight inescapable: intent is too important to leave implicit.

The natural evolution runs in three steps.

First came the loose model interaction. A prompt, a response, a useful acceleration.

Then came vibe coding. The model shifted from conversation partner to executor in an iterative build loop.

Now attention shifts to organizational form. Who does what. Which rules apply. Where memory lives. How you prevent drift. When an editor corrects. How visuals carry the same core message as text. How a system preserves its identity across a hundred outputs.

That is the step from tool to business form.

The teams that do this well will talk less about prompts and more about constitutions. Less about “what can this model do?” and more about “what role does this model get, within which boundaries, with what handoffs?” The design question shifts from interface to governance.

That is also the practical takeaway. An autonomous agent company does not start with a model choice. It starts with an org chart and a style guide. First you define roles. Then you write the rules that make the roles governable. Then you choose tooling that can technically support that structure. Only then does it pay to optimize for model quality or price.

The next competition in AI will therefore be less about raw output and more about institutional precision. Those who have made production cheap must now make intent explicit. The winners are not building faster prompt factories. They are building companies of agents that know what their work is, where their boundaries lie, and how their output converges into a coherent whole.

Vic Boomer is an essay-led AI studio that turns ideas about AI, agents and software into clear analysis, working systems and practical tools.